This is a follow-up to the previous ring sizer report. It documents the new upgrade, in which the two classical CV stages most responsible for real-world failures — a standard-size card detection and hand segmentation — were replaced by Meta’s Segment Anything, while keeping the entire pipeline local, CPU-only, and under 5 s of model compute per image.

- 🚀 Live Demo: sizer.femometer.com

1. Background

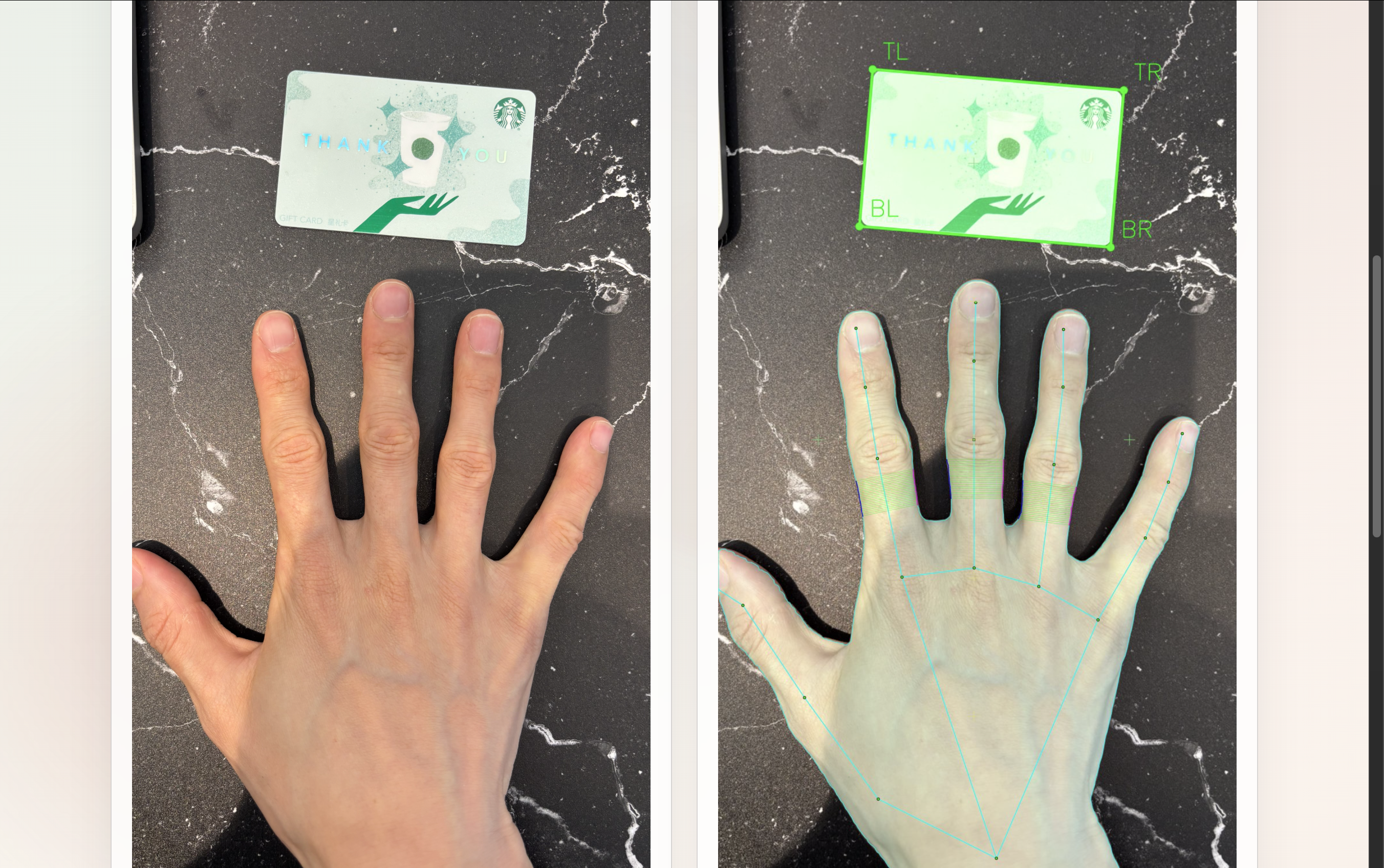

The earlier reports’ ±0.5 mm accuracy numbers were measured under lab conditions: a tripod, a ring flash, a clean white sheet of paper, and a standard-sized card placed coplanar to the hand on a uniform background. Real users do none of this. A photo from the wild looks like:

- Cluttered, busy backgrounds — a wood desk, a patterned tablecloth, a laptop keyboard. The card is no longer the only large rectangle in the frame, and “find the cleanest rectangle” stops being a useful prior.

- Hard shadows directly alongside the finger edge — instructions tell the user to turn the flash on; users routinely ignore that. Without flash, the finger casts its own shadow a few millimetres from the true skin boundary, parallel to it. To a Sobel gradient detector this shadow is itself a strong, well-defined edge — and often a stronger one than the actual skin/background transition.

Both card and finger edge detection failures gated the project’s goal of a robust tool for real-world application. The premise of 2.0: replace both with a foundation segmentation model, keep everything else.

2. SAM and Model Size Choice

| Constraint | Choice | Reason |

|---|---|---|

| License | SAM 2.1 (Apache 2.0) | Safe for public Spaces hosting. SAM 3 is research-only. |

| Size | Hiera Tiny → Hiera Small (~150 MB) | Fits HF free-tier CPU. Tiny and Small are visually indistinguishable on this task (IoU 0.987 vs 0.982 on the comparison image), so the upgrade was free in memory but slightly cleaner around nails. |

| Inference mode | Prompt-based, not AMG | A single decoder pass on a point prompt is ~30× cheaper than the 256-prompt automatic mask grid we initially used for card detection. |

| Training | Zero-shot | No fine-tuning; the model is used as-is. |

SAM 2.1 Small inference on CPU (Apple Silicon M5): ~0.5–0.7 s per prompt-based call.

3. Hand Mask Generation

For the hand, we have a strong prior — MediaPipe gives us 21 landmarks, including five (wrist, MCP of each finger) that lie firmly inside the palm. So instead of AMG, we feed SAM a single positive point at the palm centre (mean of landmarks 0, 5, 9, 13, 17).

A side-by-side experiment on a representative test image:

| Backend | Time | Mask quality |

|---|---|---|

| MediaPipe (current) | 0.2 s | Poor — convex polygon, doesn’t trace fingers, nails, or inter-finger gaps |

| SAM 2.1 Tiny (point prompt) | 0.6 s | Pixel-perfect. Every finger, nail, and the ring on the middle finger traced cleanly. IoU 0.987 |

| SAM 2.1 Small (point prompt) | 0.7 s | Visually indistinguishable from Tiny. IoU 0.982 |

4. Prompt-based Card Detection

Once hand segmentation had proved that prompt-based SAM was both fast and accurate, the same pattern was applied to card detection. The trick was finding seed points without knowing where the card was.

Solution: use the hand mask as a negative-space prior. Run hand segmentation first (~0.5 s), then sample a 5×5 grid of points on the background (outside the hand mask) — the card is somewhere on that background. For each seed:

- One positive prompt at the seed

- Negative prompts at every other seed: “segment the thing here, not the things over there”

SAM returns a handful of candidate masks per seed; the same rectangularity / aspect-ratio / area filters pick the card.

5. Architecture After the Upgrade

Image

→ MediaPipe landmarks

→ SAM hand mask (palm-centre prompt)

→ SAM card mask (background-seed prompts)

→ Per-finger isolation against the SAM mask

→ Landmark-based axis (MCP→PIP, proximal phalanx)

→ Ring-zone localisation (anatomical)

→ Width measurement: SAM mask boundary

→ Confidence (4-component) → JSON + overlay PNG

What changed structurally

- Shared SAM backend (

src/sam_backend.py) — a singleSam2Model+Sam2Processorsingleton, lazily loaded, used by both card and hand stages. One encoder, one set of weights in memory. Trieslocal_files_only=Truefirst to avoid HEAD-request retry storms on flaky networks. - Pipeline reordered — hand mask first, card seeds derived from it, then card. This is only viable because the hand SAM call is now cheap (0.5 s) and accurate enough to be a reliable negative-space prior.

- MediaPipe is now landmarks-only — its mask output is no longer consumed anywhere in the measurement path. It is still required to detect the hand and provide 21 landmarks; if MediaPipe fails, the pipeline fails before SAM is invoked.

6. Performance and Footprint

| Stage | CPU time (Apple Silicon M5) |

|---|---|

| Image quality + MediaPipe | ~0.2 s |

| SAM hand mask | ~0.5 s |

| SAM card mask (prompt) | ~1.3 s |

| Finger isolation + axis + zone | <0.1 s |

mask edge measurement | <0.05 s |

| Visualisation + JSON | ~0.1 s |

| Total end-to-end | ~2.2 s |

Memory: shared SAM 2.1 Small encoder ≈ 150 MB resident. First run downloads weights from HuggingFace (facebook/sam2.1-hiera-small); subsequent runs hit the local cache.

The end-to-end CPU budget on commodity Apple Silicon is comfortably under 3 s. HF free-tier CPU is slower; the prompt-based path should still fit inside the 5 s public budget, but final latency on that hardware is the next thing to benchmark.

7. Takeaways

1. Foundation models are not always the answer — but when two stages of classical CV both stop working in the same kinds of photos, they often are. The earlier reports’ accuracy work was real and valuable. 2.0’s premise was different: the failures we still saw in real-world photos were not measurement-precision failures but detection failures, where the input to the precise measurement was simply wrong. SAM directly addresses that class.

2. Prompt-based inference changes the deployment story. A 30× speedup over AMG (0.6 s vs 18–20 s) is the difference between an academic regression script and a Hugging Face Space. It also makes it cheap to run SAM twice per image — once for the hand, once for the card — using one as a prior for the other.

Net result: a measurement pipeline that no longer needs the user to find a white sheet of paper. The lab-condition accuracy from the earlier reports still holds when the conditions hold; what’s new is how far the conditions can drift before the pipeline gives up. Recalibrating the linear bias correction on top of the cleaner SAM-derived measurement is the next obvious piece of work.